A group of U of T computer scientists wants to make it easier for content creators to film how-to videos.

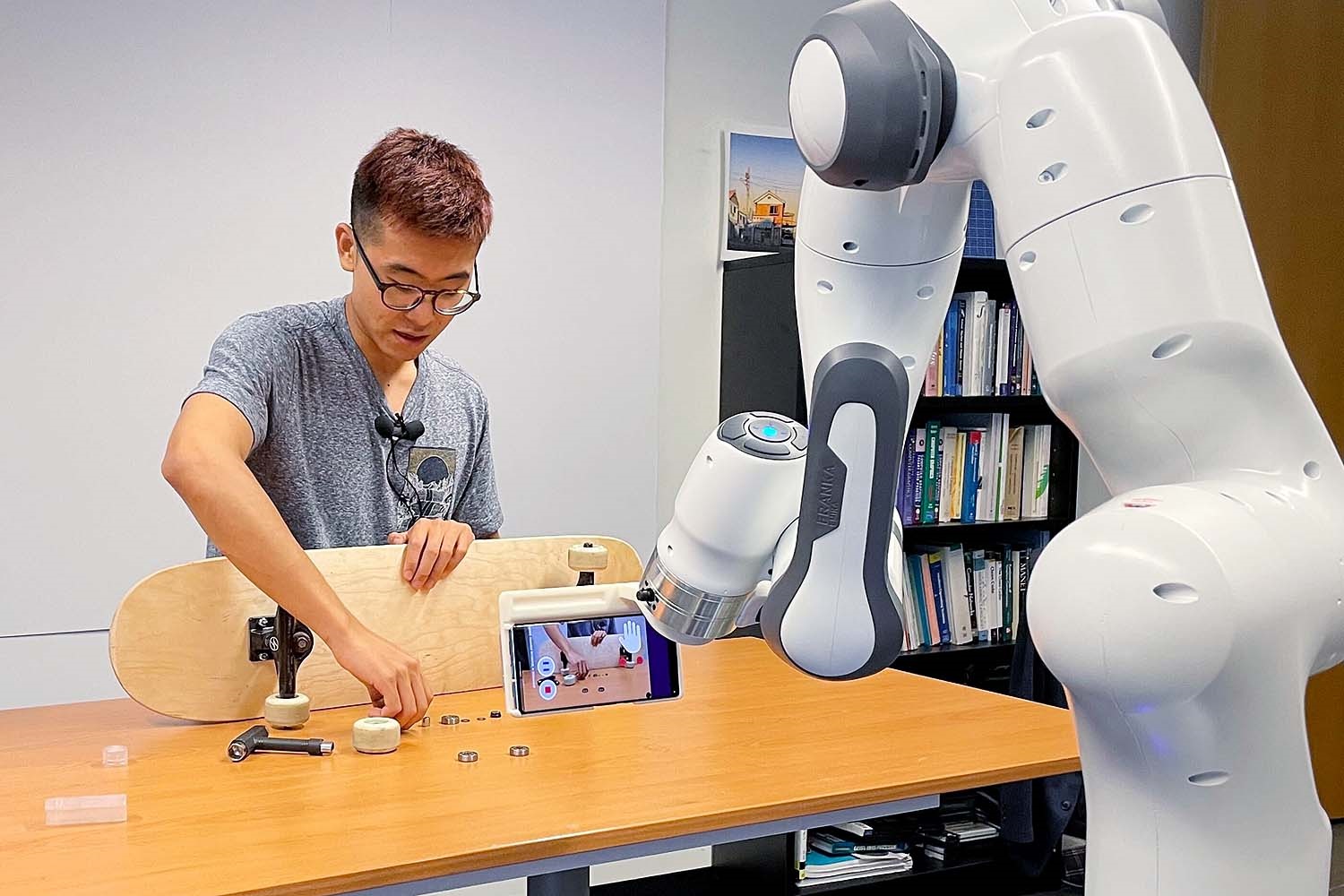

Looking to foster closer human-robot collaboration, they have developed Stargazer, an interactive camera robot that helps instructors create engaging tutorial videos demonstrating physical skills.

For instructors or creators without access to a cameraperson, Stargazer can capture dynamic instructional videos and address the constraints of working with static cameras.

“The robot is there to help humans, but not to replace humans,” explains lead researcher Jiannan Li, a PhD candidate in the Department of Computer Science in the Faculty of Arts & Science. “The instructors are here to teach. They have their own skills and personalities to attract their students or their fan base. The robot’s role is to help with filming — the heavy lifting work,” he says.

This work is outlined in a published paper, Stargazer: An Interactive Camera Robot for Capturing How-To Videos Based on Subtle Instructor Cues presented this year at the Association for Computing Machinery Conference on Human Factors in Computing Systems (CHI), a leading international conference in human-computer interaction.

Li’s co-authors include fellow members of the Dynamic Graphics Project (dgp) lab: postdoctoral fellow Mauricio Sousa, PhD students Karthik Mahadevan and Bryan Wang, and professors Ravin Balakrishnan and Tovi Grossman; as well as cross-appointed professor Anthony Tang, recent U of T Faculty of Information graduates Paula Akemi Aoyaui and Nicole Yu; and third-year computer engineering student Angela Yang.

Stargazer’s single camera is on a robot arm with seven independent motors that can move along with the video subject by autonomously tracking regions of interest. The system’s camera behaviours can be adjusted based on subtle cues from instructors, such as body movements, gestures and speech that are detected by the prototype’s sensors.

The instructor’s speech is recorded with a wireless microphone and sent to a speech recognition API, Microsoft Azure Speech-to-Text. The transcribed text, along with a custom prompt, is then sent to a large language model, GPT-3, which labels the instructor’s intention for the camera, such as a standard vs. high angle and normal vs. tighter framing.

These camera control commands are cues naturally used by instructors to guide the attention of their audience and are not disruptive to instruction delivery, the researchers say.

For example, the instructor can have Stargazer adjust its view to look at each of the tools they will be using during a tutorial by pointing to each one, prompting the camera to pan. The instructor can also say to viewers, “Now if you look at how I put ‘A’ into ‘B’ from the top,” Stargazer will respond by framing their action with the items with a high angle to give the audience a better view. The instructor can control zoom-in by saying sentences that suggest taking a closer look, like, “Pay more attention to how I take the lid off.”

In designing the interaction vocabulary, the team wanted to identify signals that are smooth, subtle and avoid the need for the instructor to communicate separately to the robot from the audience.

“The goal is to have the robot understand in real time what the human instructor wants, what kind of shot they want. The important part of this goal is that we want these vocabularies to be non-disruptive. It should feel like they fit into the tutorial,” says Li.

Stargazer’s abilities were put to the test by the team in a study involving six instructors, each teaching a distinct skill to create dynamic tutorial videos.

Using Stargazer, they were able to produce videos demonstrating physical tasks on a diverse range of subjects, from skateboard maintenance to interactive sculpture making to setting up virtual reality headsets, while relying on the robot for subject tracking, camera framing and camera angle combinations.

The participants were each given a practice session and completed their tutorials within two takes. The researchers reported all of the participants were able to create videos without needing any additional camera controls than what was provided by the robotic camera and were satisfied with the quality of the videos produced.

While Stargazer’s range of camera positions is sufficient for tabletop activities, the team is interested in exploring the potential of camera drones and robots on wheels to help with filming tasks in larger environments from a wider variety of angles.

They also found some study participants attempted to trigger object shots by giving or showing objects to the camera, which were not among the cues that Stargazer currently recognizes. Future research could investigate methods to detect diverse and subtle intents, they say, by combining simultaneous signals from an instructor’s gaze, posture and speech, which Li says is a long-term goal the team is making progress on.

Li explains the team is also looking to incorporate more expansive language. He points out Stargazer has a limited set of vocabulary to ensure there is a clear response from the robot and would not be misinterpreted. However, he notes, in its current form, that means the instructors need to be particular in their language choice which can be more cognitively taxing than the non-verbal cues.

While the team presents Stargazer as an option for those who do not have access to professional film crews, the researchers admit the robotic camera prototype relies on an expensive robot arm and a suite of external sensors.

However, Li says the Stargazer concept is not limited by costly technology.

“I think there’s a real market for robotic filming equipment, even at the consumer level. Stargazer is expanding that realm but looking farther ahead with a bit more autonomy and a little bit more interaction. So, I think realistically, it can be available to consumers,” Li adds.

He says the team is excited by the possibilities Stargazer presents for human-robot collaboration.

“For robots to work together with humans, the key is for robots to understand humans better. Here, we are looking at these vocabularies, these typically human communication behaviours,” Li explains. “We hope to inspire others to look at understanding how humans communicate, how they would feel natural and not burdened and how robots can pick that up and have the proper reaction, like assistive behaviours.”